Barcelona hosts plenty of technology conferences. However, MWC 2026 was different. It functioned as a pressure test for where infrastructure industries think the next decade is going.

The real conversations were happening around AI infrastructure, network intelligence, edge computing, and the systems required to support a world where machines process video continuously.

The event was anticipated to draw around 100,000 participants across telecom operators, cloud providers, semiconductor companies, and software vendors. That breadth is a reminder that the mobile industry now sits at the intersection of several digital economies, not just device manufacturing.

The most interesting implication of that convergence concerns video.

Video has become a shared infrastructure challenge spanning telecom, AI systems, cloud computing, and device intelligence. MWC 2026 made that shift impossible to ignore.

Video: The Largest Systems Problem in Tech

The internet has been drifting toward video dominance for years. What changed is the nature of the workload.

Streaming movies is relatively predictable. The next wave of video traffic is not.

Consider the workloads that could emerge simultaneously:

- Live commerce streams with real-time purchasing overlays.

- Industrial cameras feeding machine-vision models in factories.

- AR collaboration sessions where multiple users interact inside the same digital environment.

- AI assistants interpreting live video feeds from phones and wearable devices.

Each of those systems generates massive video data flows while requiring extremely low latency. That combination stresses every layer of digital infrastructure.

Industry analysts estimate that video already represents the majority of global internet traffic and continues to grow as immersive and AI-driven services emerge.

At MWC 2026, the implications showed up everywhere. Network vendors discussed AI-driven traffic optimization. Semiconductor companies emphasized on-device AI processing for video streams. Cloud providers talked about distributed inference systems designed to process visual data closer to the user.

None of these conversations belonged exclusively to the media industry.

That is precisely the point.

AI Turned Video Into a Computational Problem

One theme echoed repeatedly across demonstrations and briefings. Video is no longer just media. It is becoming a primary input for artificial intelligence systems.

Louis Powell, Director of AI Initiatives at GSMA, said: “As AI adoption accelerates across Africa’s mobile ecosystem, safety and reliability are paramount. Through this collaboration with Zindi, we are supporting the development of practical tools and benchmarks that reflect Africa’s linguistic diversity and deployment realities. Strengthening AI trust and safety is essential to unlocking the full potential of AI for inclusive digital growth.”

Several vendors showcased multimodal AI models capable of interpreting video, audio, and text simultaneously in real time. These systems allow devices to analyze live environments, recognize objects, translate spoken language, and respond conversationally.

Technically impressive. But the deeper implication matters more.

Once AI models begin treating video as continuous data input, the entire architecture surrounding video has to change.

Frames must be processed instantly rather than buffered. Data often needs to remain close to the device for privacy and latency reasons. Network capacity becomes part of the AI pipeline.
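To make "processed instantly rather than buffered" concrete, here is a minimal sketch of a latest-frame-wins buffer. It is an illustration under stated assumptions, not a description of any system shown at MWC: the `LatestFrameSlot` class, the `simulate` function, and the timing values are all hypothetical. The idea is that when the model is slower than the camera, stale frames are dropped so the model always sees the most recent view instead of working through a growing backlog.

```python
from collections import deque
from typing import List, Optional, Tuple


class LatestFrameSlot:
    """Single-slot buffer: a new frame replaces any frame still waiting."""

    def __init__(self) -> None:
        self._slot: deque = deque(maxlen=1)  # capacity 1, so old frames are evicted
        self.dropped = 0

    def push(self, frame_id: int) -> None:
        if self._slot:
            self.dropped += 1  # the waiting frame is stale and gets replaced
        self._slot.append(frame_id)

    def pop(self) -> Optional[int]:
        return self._slot.popleft() if self._slot else None


def simulate(frames: int, capture_ms: int, inference_ms: int) -> Tuple[List[int], int]:
    """Hypothetical camera emits a frame every capture_ms; the model needs inference_ms per frame."""
    slot = LatestFrameSlot()
    processed: List[int] = []
    busy_until = 0  # time at which the model becomes free again
    for frame_id in range(frames):
        now = frame_id * capture_ms
        slot.push(frame_id)
        if now >= busy_until:  # model is idle: take the newest frame
            processed.append(slot.pop())
            busy_until = now + inference_ms
    return processed, slot.dropped


processed, dropped = simulate(frames=10, capture_ms=10, inference_ms=25)
print(processed, dropped)
```

With these assumed timings the model handles frames 0, 3, 6, and 9 and drops the other six. A conventional playback buffer would instead keep every frame and fall further behind real time.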

In other words, video becomes less like entertainment and more like a sensor network.

Real-Time Video Processing Inside the Network

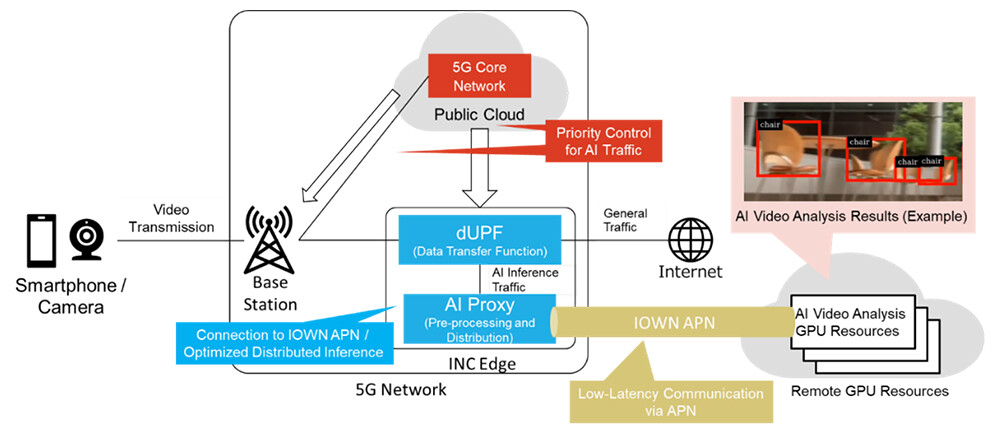

A relevant example appeared just days before MWC when NTT and NTT DOCOMO demonstrated a system that analyzes live video streams directly inside the network rather than sending them to distant cloud servers.

The architecture connects distributed GPU resources to a 5G network through what the companies call an in-network computing edge, allowing AI models to process camera data with extremely low latency.

In their demonstration, video captured by devices was transmitted through the network and analyzed in real time using remote GPU infrastructure, achieving response times fast enough for applications such as autonomous robot navigation.

Source: NTT News Release

The significance is architectural. Instead of treating video as content to be delivered to viewers, the system treats video as sensor input for machines. A robot captures visual data. The network routes it to distributed compute resources. AI models interpret the scene and return instructions instantly.

NTT describes this approach as part of the infrastructure required for future 6G systems that support robotics, XR environments, and AI-driven services operating continuously in the physical world.
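A rough latency budget shows why the location of compute matters for this kind of control loop. The sketch below is back-of-the-envelope arithmetic, not NTT's figures: the distances and the 10 ms inference time are assumed purely for illustration, and the model ignores radio access, queuing, and video encoding delays. The only physical constant used is that light in optical fiber travels roughly 200 km per millisecond.

```python
FIBER_KM_PER_MS = 200.0  # light in optical fiber covers roughly 200 km per millisecond


def round_trip_ms(distance_km: float, inference_ms: float) -> float:
    """Propagation out and back, plus time spent running the model."""
    propagation_ms = 2 * distance_km / FIBER_KM_PER_MS
    return propagation_ms + inference_ms


# Assumed, illustrative values: compute 50 km away versus 2,000 km away.
edge = round_trip_ms(distance_km=50, inference_ms=10)
cloud = round_trip_ms(distance_km=2000, inference_ms=10)
print(f"edge: {edge} ms, distant cloud: {cloud} ms")
```

Under these assumptions the nearby site responds in about 10.5 ms and the distant one in about 30 ms. The gap comes entirely from propagation, which no amount of extra GPU capacity can remove.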

Telecom Operators Are Moving Up the Stack

Telecom executives have spent years worrying about commoditization. If networks simply transport data, the value eventually concentrates elsewhere.

AI-driven network architecture may change that equation.

Several telecom vendors at MWC demonstrated systems designed to automate network operations using machine learning. These platforms dynamically allocate bandwidth, optimize traffic flows, and prioritize latency-sensitive workloads such as immersive media or industrial video streams.
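As a simplified picture of what prioritizing latency-sensitive workloads can mean, the sketch below shares a fixed link capacity among flows, serving the tightest latency budget first. It is a hypothetical strict-priority allocator with made-up flow names and numbers, not any vendor's algorithm. The platforms described above rely on machine learning and far richer signals.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class Flow:
    name: str
    demand_mbps: float
    latency_budget_ms: float  # smaller budget means more latency-sensitive


def allocate(flows: List[Flow], capacity_mbps: float) -> Dict[str, float]:
    """Grant bandwidth in order of latency sensitivity until capacity runs out."""
    grants: Dict[str, float] = {}
    remaining = capacity_mbps
    for flow in sorted(flows, key=lambda f: f.latency_budget_ms):
        grant = min(flow.demand_mbps, remaining)
        grants[flow.name] = grant
        remaining -= grant
    return grants


# Hypothetical flows competing for a 100 Mbps link.
flows = [
    Flow("vod_movie", demand_mbps=50, latency_budget_ms=2000),
    Flow("xr_concert", demand_mbps=40, latency_budget_ms=20),
    Flow("factory_camera", demand_mbps=30, latency_budget_ms=50),
]
print(allocate(flows, capacity_mbps=100))
```

Here the XR concert and the factory camera receive their full demand, while the buffered movie stream absorbs the shortfall and gets 30 of its 50 Mbps. Strict priority can starve low-priority flows entirely, so a production scheduler would normally add minimum guarantees or weighted sharing.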

Consider a live XR concert or global esports broadcast. If latency fluctuates by even a few milliseconds, the experience degrades immediately.

Networks capable of prioritizing those workloads dynamically become part of the product itself.

Telecom companies stop being passive carriers. They become active infrastructure providers for video experiences.

The Ecosystem Is Still Fragmented

The optimism around AI-native infrastructure masks a less convenient reality. The industry has not agreed on how this new architecture should actually work.

Telecom operators are building their own edge compute platforms. Cloud providers want those workloads to run inside hyperscale environments.

Chip manufacturers are embedding AI inference engines directly into devices. Software vendors are attempting to orchestrate everything through new operating layers.

That fragmentation explains the surge of partnership announcements surrounding MWC. Semiconductor companies are aligning with telecom vendors. Network providers are integrating cloud platforms into their infrastructure stacks.

Everyone sees the same opportunity. However, nobody fully controls the ecosystem yet, and historically, infrastructure transitions rarely unfold cleanly.

Marketing Will Feel the Impact Earlier Than Expected

Marketing teams usually encounter infrastructure change indirectly. New platforms appear. New formats emerge. Eventually, new advertising models follow.

Video may accelerate that timeline.

If AI systems can generate and modify video dynamically, the idea of a fixed piece of content starts to look outdated. Real-time personalization becomes possible at the visual level.

Meanwhile, ultra-low latency networks enable interactive formats that blend commerce, entertainment, and communication.

Live shopping environments. Virtual product demonstrations inside mixed-reality spaces. Video streams that respond to viewer behavior as they watch.

Those capabilities require collaboration between industries that historically barely spoke to each other.

Telecom networks. Cloud infrastructure providers. AI model developers. Media platforms.

The marketing ecosystem will end up sitting on top of that stack, whether it planned to or not.

Barcelona Offered a Preview of the Next Media Economy

MWC has always been a forward-looking event. Not because every demo becomes reality, but because infrastructure industries reveal their long-term priorities there.

This year’s priority was unmistakable.

Video is no longer just a format delivered through digital platforms. It is becoming a shared computational workload that spans networks, AI systems, and cloud infrastructure.

FAQs

1. Why is video becoming a strategic infrastructure layer for digital services?

Video is increasingly used as operational data for AI systems, analytics platforms, and immersive digital experiences. This shift requires coordination between telecom networks, cloud infrastructure, and AI platforms to process visual data quickly and reliably.

2. How are telecom networks influencing the future of video platforms?

Telecom networks are evolving beyond basic connectivity. With edge computing and AI-driven network management, operators can support low-latency video services such as immersive streaming, real-time analytics, and interactive digital experiences.

3. What role does artificial intelligence play in modern video systems?

Artificial intelligence enables machines to analyze, generate, and personalize video content. Multimodal AI models can interpret visual scenes, speech, and contextual data simultaneously, supporting applications such as automated video insights and intelligent digital assistants.

4. Why are cross-industry partnerships emerging in the video ecosystem?

Advanced video services require multiple technology layers, including connectivity, compute infrastructure, AI models, and devices. Telecom operators, cloud providers, semiconductor companies, and software platforms are collaborating to build these integrated ecosystems.

5. How will AI-driven video experiences impact enterprise marketing strategies?

AI-enabled video technologies allow brands to create interactive and personalized content experiences. This includes dynamic video messaging, immersive product demonstrations, and real-time audience engagement powered by AI and high-performance network infrastructure.
